Tesla Details its Latest Autopilot Supercomputer

Advanced driver assistance systems like Tesla’s Autopilot use a lot of hardware to collect, store and use data, as shown in a recent post from one of Tesla’s engineering managers.

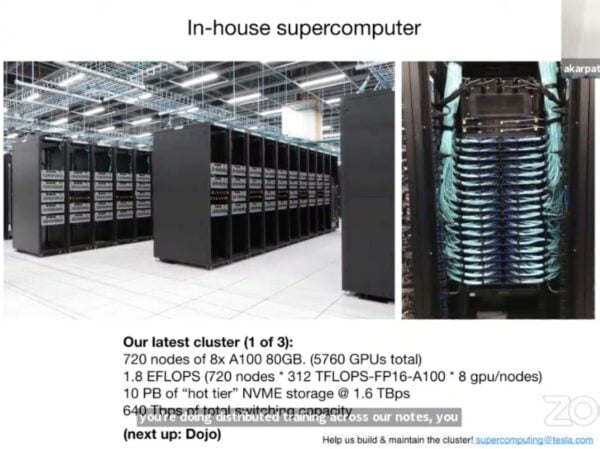

Tesla Autopilot Artificial Intelligence Engineering Manager Tim Zaman shared photos of the company’s third and most recent Autopilot supercomputer cluster (via u/tsla4k).

The cluster comprises 720 nodes, each with eight 80 GB A100 GPUs, for a total of 5,760 GPUs, along with 10 PB of “hot tier” NVMe storage running at 1.6 TBps.
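For a sense of scale, the figures above can be checked with a little arithmetic. The following is only a rough sketch: it assumes decimal units (1 PB = 1,000 TB, 1 TB = 1,000 GB) and that “TBps” means terabytes per second. The function names are illustrative and do not come from Tesla.

```python
# Figures quoted in the article.
NODES = 720
GPUS_PER_NODE = 8
GPU_MEMORY_GB = 80
HOT_STORAGE_PB = 10
STORAGE_THROUGHPUT_TB_PER_S = 1.6  # assumption: "TBps" = terabytes per second


def total_gpus(nodes: int, gpus_per_node: int) -> int:
    """Number of GPUs across the whole cluster."""
    return nodes * gpus_per_node


def total_gpu_memory_tb(gpus: int, memory_gb: int) -> float:
    """Aggregate GPU memory in TB, assuming 1 TB = 1,000 GB."""
    return gpus * memory_gb / 1000


def full_read_seconds(storage_pb: float, throughput_tb_per_s: float) -> float:
    """Time to stream the whole hot tier once, assuming 1 PB = 1,000 TB."""
    return storage_pb * 1000 / throughput_tb_per_s


if __name__ == "__main__":
    gpus = total_gpus(NODES, GPUS_PER_NODE)
    print(f"GPUs: {gpus}")
    print(f"Aggregate GPU memory: {total_gpu_memory_tb(gpus, GPU_MEMORY_GB):.1f} TB")
    seconds = full_read_seconds(HOT_STORAGE_PB, STORAGE_THROUGHPUT_TB_PER_S)
    print(f"One full pass over hot storage: about {seconds / 3600:.1f} hours")
```

Under those assumptions, the 5,760 GPUs hold roughly 460 TB of memory between them, and reading the entire hot tier once at the quoted rate would take a little under two hours.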

At #Tesla Autopilot we are bringing up our 3rd cluster – one of the biggest supercomputers in the world. We're scaling fast and hiring, if interested please DM (ml/py/cpp/gpus/cuda/react/devops/sre) https://t.co/EwN5vHZaCe pic.twitter.com/H5QiLkklCf

— Tim Zaman (@tim_zaman) June 21, 2021

In the post, Zaman also said the company was “scaling fast and hiring,” and asked anyone interested to DM him. He listed the skills Tesla is looking for as ML, Python, C++, GPUs, CUDA, React, DevOps and SRE, and linked to the streamed CVPR 2021 Autonomous Driving Workshop from which the shots came.

In general, anyone interested in working on physical-word AI problems, should consider joining Tesla. Fastest path to deploying your ideas irl.

— Elon Musk (@elonmusk) June 21, 2021

Hosted by Tesla Senior Director of Artificial Intelligence Andrej Karpathy, the video explains why large-scale computing matters to any automaker looking to succeed in autonomous driving.

The video also included a line of text encouraging viewers to “help us build & maintain the cluster,” along with the email su************@***la.com, where interested candidates can send their information.

Tesla recently started rolling out vehicles that rely on camera-based vision, whereas its previous vehicles were also outfitted with some radar hardware.
